Your AI Already Knows

Your Team's Patterns

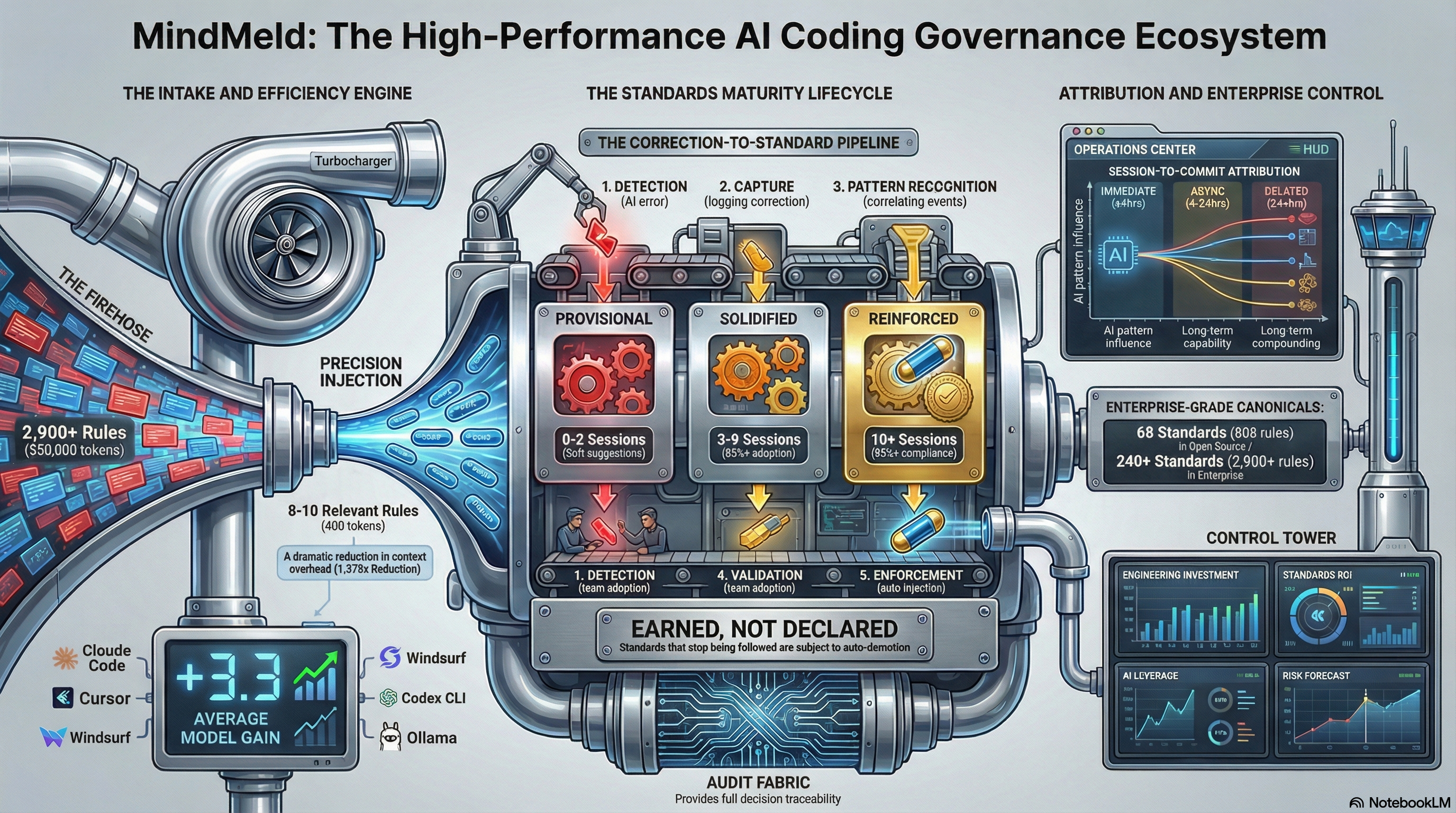

MindMeld captures what your team knows, proves what works through real usage, and makes sure every AI coding session follows your standards — automatically.

Your Team's Knowledge Evaporates

Every day, your engineers correct AI output. "Don't use connection pools in Lambda." "We chose OIDC because of the security audit." "Deploy migrations before code, always." Those corrections are institutional knowledge — and right now, they vanish after every session.

Without MindMeld

- AI makes the same mistakes every session

- New hires repeat every lesson from scratch

- Senior leaves → knowledge leaves

- No proof any of this is improving

With MindMeld

- AI follows your patterns from the first line

- New hires get the team's knowledge on day one

- Knowledge survives any turnover

- Executive-ready proof it's working

Two Minutes to Set Up. Zero Maintenance.

Install once. MindMeld handles everything else.

Connect

Install the CLI and connect to your AI coding tool. Works with Claude Code, Cursor, Windsurf, Cline, Codex CLI, Aider, Ollama — anything that supports MCP.

Learn

MindMeld learns what your team knows and separates signal from noise. Standards that actually matter rise to the top — automatically, without anyone maintaining a wiki.

Govern

Proven standards are automatically enforced in every AI session. Your governance stays current without anyone maintaining it. No stale rules. No manual updates.

What Changes

MindMeld isn't another rules file. It's a system that captures, validates, and enforces your team's engineering knowledge.

Knowledge Survives Turnover

When your senior engineer leaves, their hard-won knowledge stays. Everything your team has learned is preserved, attributed, and ready for the next person. New team members get the full picture from session one.

Model Changes Don't Break You

Model updates silently change AI behavior. Provider switches reset quality to zero. Your standards live outside the model — they survive any change, any provider, any regression.

Standards Earn Their Place

Standards aren't declared by someone writing a wiki page. They're validated through real team usage. What actually works earns trust. What doesn't fades away.

Proof It's Working

Know which AI sessions led to shipped code. See your team converging on proven patterns. Executive-ready reporting built for the CTO presenting to the board — not a developer dashboard with an export button.

Not just code standards.

MindMeld captures code patterns, business decisions, ISMS policies, compliance requirements, and architectural invariants. The full picture of what your engineering organization knows.

Every Model Improved

Same task. Same standards. Six different model families. Zero got worse.

| Model | Before | After | Gain |

|---|---|---|---|

| devstral (24B) | 1/6 | 6/6 | +5 |

| deepseek-coder-v2 (16B) | 1/6 | 5/6 | +4 |

| qwen2.5-coder (14B) | 2/6 | 5/6 | +3 |

| qwen3-coder (30B) | 2/6 | 5/6 | +3 |

| codegemma (7B) | 2/6 | 5/6 | +3 |

| codellama (13B) | 1/6 | 3/6 | +2 |

models improved

avg best-practice gain

models got worse

Task: Write a Lambda + PostgreSQL handler. Scored on 6 best practices. Cloud models (Claude, GPT) and local models (Ollama) both supported.

What You'll See, When

Results compound over time. Here's what to expect.

Week 1

- Standards active in every session

- First corrections captured automatically

- AI outputs align with your existing patterns

- Fewer "no, do it this way" moments

Month 1

- Standards validated by real team usage

- Business decisions captured alongside code

- Team convergence visible in dashboard

- Governance stays current automatically

Quarter 1

- Battle-tested standards across the team

- Clear line from AI sessions to shipped code

- Measurable capability improvement

- Institutional knowledge survives turnover

<2s

Setup to first governed session

240+

Standards · 2,900+ Rules

7+

AI tools supported

0

Manual maintenance required

Built for Both Sides of the Table

For Engineers

The AI already knows your team's patterns. No more correcting the same mistakes. No more onboarding your tools to your codebase. Start coding and it just works.

For Leaders

Proof that AI investment is producing engineers who learn and compound — not engineers who copy and plateau. Impact data, adoption trends, and risk signals built for the board conversation.

From the Equilateral Blog

When the Model Regresses, Your Standards Shouldn't

AMD's VP of Software documented reasoning regression across model updates. Here's why your team's knowledge must live outside the model.

Read →Governance Is Not a Prompt. It's an Authority System.

AWS's own AI coding tool caused a 13-hour outage. Here's why governance must be architectural, not aspirational.

Read →Karpathy's Knowledge Base Is Personal. Here's the Enterprise Version.

His markdown wiki works for one person. MindMeld works at team scale — with proof that knowledge compounds.

Read →Start Free. Scale With Governance.

Code FOUNDER80 auto-applied at checkout. Limited availability.

Contribute your standards back to strengthen the ecosystem and save $50/mo. Your engineers earn attribution for every contribution.

Open Source

Standards injection, no account required

- Session memory

- 1 participant, 3 projects

- Create your own standards

- Cross-platform sync

Pro Solo

Intelligent injection + standards that learn

Founding member rate — first 12 months

- Context-aware standards per session

- Standards that learn and adapt

- 5 participants, 5 projects

- Create your own standards

- Cross-platform sync

Pro Team

Team governance + analytics

Founding member rate — first 12 months

- Standards picker (per-user control)

- Team convergence analytics

- Developer capability tracking

- AI impact attribution

- 10 participants, unlimited projects

- All Pro Solo features

Enterprise — $179/seat/mo

25-seat minimum. Full governance instrumentation: AI impact attribution, team capability analytics, executive reporting suite, SSO, and dedicated support.

Founding member pricing: 80% off for 12 months. After Year 1, prices return to standard rates.

Get started in minutes

Install the CLI. Connect your AI tool. Your team's knowledge is active from the first session.

Setup guides for your tool: